AI Security for the CISSP: What’s Changed and How to Prepare

On April 2, 2026, ISC2 published the Exam Guidance for Artificial Intelligence, a 25-page document that maps how AI security concepts are woven into each of its nine certification exams. If you’re studying for the CISSP (or maintaining your certification through CPEs), this document shows how AI security is incorporated into the exam.

The CISSP exam outline, which has been in effect since April 15, 2024, already includes AI-specific references in several domain objectives. ISC2 didn’t bolt on a new “AI Security” domain. Instead, they distributed AI concepts throughout the existing structure, as they’ve always handled emerging technology. The difference this time is scale: AI touches every domain, and the Exam Guidance makes that explicit.

Here’s my take on what changed, and what you need to know.

The Dual Pattern

Across all eight CISSP domains, AI shows up in two ways:

AI as a system that needs to be secured. Protecting models, training data, and AI infrastructure from attack: think data poisoning, prompt injection, adversarial inputs, and model theft.

AI as a tool you use for defense. SIEM/SOAR automation, behavioral analytics, anomaly detection, and AI-powered vulnerability scanning.

Understanding which of the two a question is asking about will help you reason through unfamiliar scenarios on the exam.

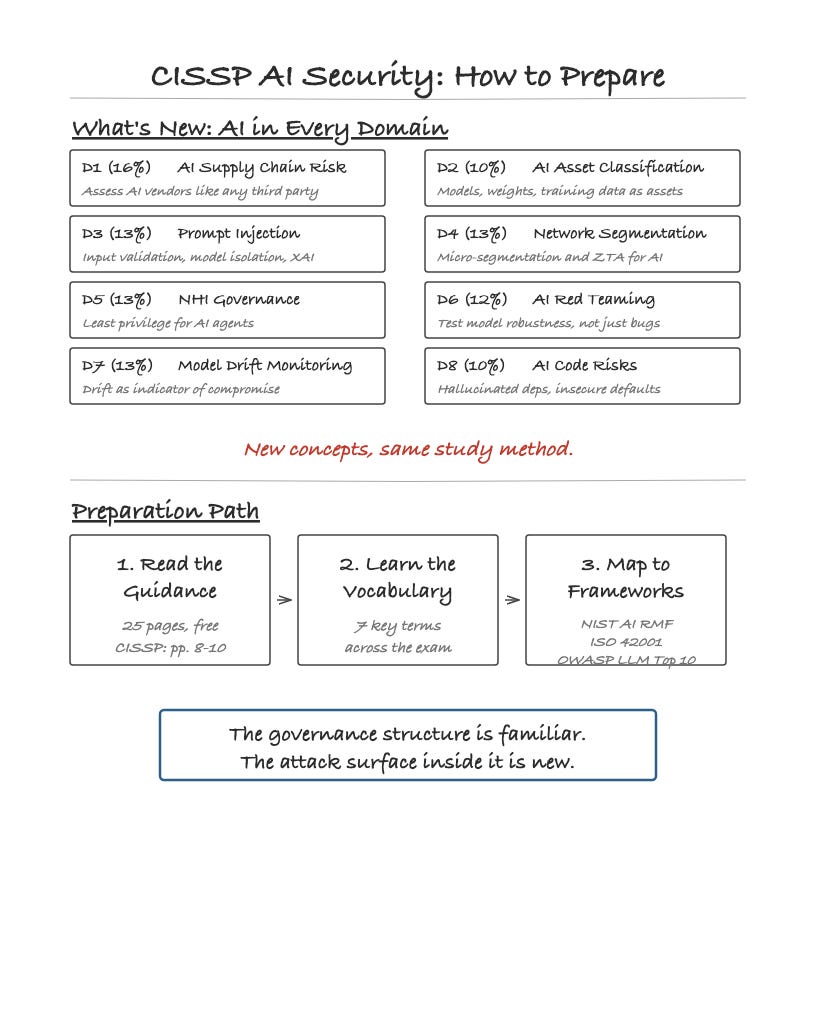

What’s New in Each Domain

Here are some AI-related concepts from each domain that I think are the most likely to feel new or foreign to CISSP candidates.

Domain 1: Security and Risk Management

The new concept: AI supply chain risk.

You already know third-party risk management. The AI version asks the same governance questions, but about different things. Where does the training data come from? What model is your vendor using, and who trained it? What happens when the model is updated and its behavior changes? CISSPs are now expected to assess AI service providers with the same rigor applied to any critical vendor. The questions are different (data provenance, bias documentation, model transparency), but the framework is the one you already know from Domain 1.

Domain 2: Asset Security

The new concept: AI-specific asset classification.

Training datasets, pre-trained models, and model weights are now assets that need to be classified and protected. A pre-trained model is intellectual property. A training dataset may contain PII that triggers privacy mandates. Model weights are a theft target. If your organization’s data classification scheme doesn’t account for these asset types, it has a gap.
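To make the classification gap concrete, here is a minimal sketch of what extending a classification scheme to AI assets might look like. The sensitivity levels, the asset names, and the rules in `classify` are illustrative assumptions, not anything ISC2 prescribes; a real scheme would follow your organization's own policy.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical four-level scheme; substitute your organization's labels.
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


@dataclass(frozen=True)
class AIAsset:
    name: str
    kind: str  # "training_dataset", "pretrained_model", or "model_weights"
    contains_pii: bool = False
    proprietary: bool = False


def classify(asset: AIAsset) -> Sensitivity:
    """Assign a label using illustrative rules (assumptions, not a standard)."""
    if asset.contains_pii:
        return Sensitivity.RESTRICTED      # privacy mandates may apply
    if asset.kind == "model_weights" or asset.proprietary:
        return Sensitivity.CONFIDENTIAL    # theft target or intellectual property
    return Sensitivity.INTERNAL


# Made-up inventory entries for demonstration only.
inventory = [
    AIAsset("support-tickets-2024", "training_dataset", contains_pii=True),
    AIAsset("fraud-model-v3", "model_weights"),
    AIAsset("vendor-base-model", "pretrained_model", proprietary=True),
    AIAsset("public-benchmark", "training_dataset"),
]
labels = {asset.name: classify(asset).name for asset in inventory}
```

The point of the sketch is simply that datasets, models, and weights appear in the inventory as first-class entries with an owner-assigned label, the same as any other asset.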

Domain 3: Security Architecture and Engineering

The new concept: Prompt injection as an architectural concern.

This is the domain where the technical specifics of AI attacks intersect with traditional security architecture. Prompt injection is the AI equivalent of SQL injection: untrusted input that manipulates the system’s behavior. But the defense isn’t just input validation. It includes architectural decisions about model isolation, output verification, and Explainable AI (XAI), which is the ability to audit why a model produced a specific output. ISC2 frames XAI as a security architecture requirement, not just a nice-to-have.
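You won’t be asked to write this on the exam, but a rough sketch can make the layering easier to picture. The example below assumes a hypothetical `model` callable that only proposes an action name and never touches tools directly (a stand-in for model isolation), with input screening before the call and output verification after it. The patterns and the allowlist are made up for illustration, and pattern matching alone is easy to evade, which is exactly why the other layers exist.

```python
import re

# Hypothetical screening patterns; real attacks are far more varied.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the )?system prompt", re.IGNORECASE),
]

# Hypothetical allowlist of actions the surrounding application may execute.
ALLOWED_ACTIONS = {"search_kb", "summarize"}


def screen_input(user_text: str) -> bool:
    """Layer 1: input validation. Returns True if no known pattern matches."""
    return not any(p.search(user_text) for p in SUSPICIOUS_PATTERNS)


def verify_output(proposed_action: str) -> bool:
    """Layer 3: output verification against the allowlist."""
    return proposed_action in ALLOWED_ACTIONS


def handle_request(user_text: str, model) -> str:
    if not screen_input(user_text):
        return "rejected: input failed screening"
    # Layer 2: isolation. The model only returns a proposed action name;
    # the application, not the model, decides whether anything runs.
    proposed_action = model(user_text)
    if not verify_output(proposed_action):
        return "blocked: action not on allowlist"
    return f"executed: {proposed_action}"


def fake_model(text: str) -> str:
    """Stand-in for a real model, used only to exercise the pipeline."""
    return "delete_records" if "cleanup" in text else "summarize"
```

Note that the second check still catches a request that slipped past input screening, which is the architectural argument in miniature: no single control is trusted to stop the attack.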

Domain 4: Communication and Network Security

The new concept: Network segmentation for AI workloads.

AI training clusters generate traffic patterns distinct from those of standard enterprise applications and pose unique lateral movement risks. The exam outline now expects CISSPs to understand micro-segmentation and Zero Trust Architecture as applied to AI environments. The goal is the same as always (prevent lateral movement from a compromised interface), but the specific architecture for isolating AI training environments from production networks is new territory.

Domain 5: Identity and Access Management

The new concept: Non-Human Identity (NHI) governance.

This one is significant. The CISSP now covers managing identities for AI agents and automated service accounts. That means understanding how to apply the Principle of Least Privilege to a system that might try to escalate its own permissions during learning or execution. It also means understanding the dual problem: you’re securing the AI’s identity (what credentials it has, who owns them, and whether it can escalate) while also using AI to make IAM more resilient (behavioral biometrics, adaptive authentication, anomaly detection in login patterns).

But credential controls alone don’t solve the problem. As Chris Hughes points out, agents don’t just exist as identities. They use identities to take action. An agent manipulated at runtime through prompt injection or a poisoned tool response will request access through valid paths, receive a properly scoped token, and act exactly as policy allows. Every identity control passes. The breach still happens. The threat model has shifted from “who holds the key” to “who is influencing the decision,” and static permission models weren’t designed to answer the latter.

For context on why this matters: a 2025 CSA survey of 383 security professionals found that only 8% were highly confident their legacy IAM tools could handle AI and NHI risks, and only 22% had formal policies for creating or removing AI identities. That makes this more than a hypothetical gap.
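As a rough illustration of least privilege applied to a non-human identity, the sketch below gives an agent a named owner, a fixed set of scopes, and an audit trail. Everything here (`AgentIdentity`, `authorize`, the scope strings) is a hypothetical name chosen for the example, not a real IAM product’s API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str                 # the accountable human or team
    scopes: frozenset          # fixed at issuance; the agent cannot add to it
    audit_log: list = field(default_factory=list)


def authorize(identity: AgentIdentity, requested_scope: str) -> bool:
    """Allow only scopes granted at issuance, and record every decision."""
    allowed = requested_scope in identity.scopes
    identity.audit_log.append((requested_scope, "allow" if allowed else "deny"))
    return allowed


agent = AgentIdentity("ticket-summarizer", "it-ops", frozenset({"tickets:read"}))
read_ok = authorize(agent, "tickets:read")          # within granted scope
escalation_ok = authorize(agent, "iam:grant_role")  # escalation attempt is denied
```

The sketch also shows the limit described above: if a manipulated agent asks for `tickets:read`, the check passes, because the request is valid. Scope checks constrain what an agent can do, not why it is doing it, so the audit trail and runtime monitoring matter as much as the permission model.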

Domain 6: Security Assessment and Testing

The new concept: Red teaming for AI systems.

Traditional penetration testing looks for software bugs and misconfigurations. AI red teaming tests different things: model robustness against evasion attacks, susceptibility to training data extraction, and “logic flaws” in the model’s output that an adversary could exploit. The Exam Guidance makes clear that CISSPs should understand these as distinct assessment methodologies, not just variations of traditional pen testing.

Domain 7: Security Operations

The new concept: Model drift as a security operations concern.

Model drift is what happens when an AI model’s performance degrades over time. Data scientists have always cared about this. What’s new is ISC2 framing it as a security operations problem. A model that’s drifting might be degrading naturally or under adversarial influence. SOC teams need to monitor AI systems as production assets, watching for drift as a potential indicator of compromise rather than just a performance issue.
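One common way data teams quantify drift is the Population Stability Index (PSI), which compares the distribution of a model’s recent scores against a baseline. The sketch below is a minimal version under stated assumptions: equal-width bins taken from the baseline range, and an alert threshold of 0.25, which is a widely quoted rule of thumb rather than anything from the Exam Guidance. The metric cannot tell natural drift from adversarial influence; it only tells the SOC that something changed and is worth investigating.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    lo, hi = min(baseline), max(baseline)
    width = ((hi - lo) / bins) or 1.0  # avoid zero width if baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bucket_shares(baseline)
    actual = bucket_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


DRIFT_ALERT_THRESHOLD = 0.25  # illustrative rule of thumb, tune per model

# Synthetic scores for demonstration only.
baseline_scores = [i / 100 for i in range(100)]        # spread across 0.00 to 0.99
shifted_scores = [0.9 + i / 1000 for i in range(100)]  # clustered near the top

stable_psi = population_stability_index(baseline_scores, baseline_scores)
drifted_psi = population_stability_index(baseline_scores, shifted_scores)
should_alert = drifted_psi > DRIFT_ALERT_THRESHOLD
```

In an operations setting, a value like `drifted_psi` would feed the same alerting pipeline as any other indicator, so that a sudden shift gets triaged as a possible compromise rather than left to the data science backlog.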

Domain 8: Software Development Security

The new concept: AI-generated code risks.

As organizations rely more heavily on AI-generated code, the CISSP emphasizes the security risks that come with it: hallucinated dependencies, where the AI references packages that don’t exist (and an attacker then publishes a malicious package under that name); insecure defaults in generated code; leaked training data in code suggestions; and the AI/ML supply chain, meaning the security of the ML libraries and frameworks your software depends on.
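One simple control against hallucinated dependencies is to compare whatever an AI assistant suggests against a list of packages your organization has already vetted, before anything is installed. The sketch below assumes a requirements-style text format and a hypothetical approved set; `fastjsonx` is a made-up name standing in for a package the assistant invented.

```python
import re


def parse_requirements(text: str) -> list:
    """Extract package names from simple requirements-style lines."""
    names = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # Cut at the first version specifier, extras bracket, marker, or space.
        name = re.split(r"[<>=!~\[; ]", line, maxsplit=1)[0]
        names.append(name.lower())
    return names


def unvetted_packages(requirements_text: str, approved: set) -> list:
    """Return names that are not on the approved list and need human review."""
    return [n for n in parse_requirements(requirements_text) if n not in approved]


# Hypothetical inputs for demonstration only.
APPROVED = {"requests", "numpy"}
suggested = """
requests==2.32.0
fastjsonx>=1.0   # suggested by the coding assistant
numpy
"""
needs_review = unvetted_packages(suggested, APPROVED)
```

An allowlist check like this does not prove a package is safe. It only ensures that an unfamiliar name gets a human look before it reaches a build, which is the governance point the domain is making.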

How to Prepare

If you’re studying for the CISSP right now, here’s some practical advice.

Don’t panic about depth. The CISSP is a management-level certification. You don’t need to know how to build a prompt injection defense, but you need to understand that prompt injection exists, that it’s an architectural concern, and that the defense involves input validation, model isolation, and output verification. As with other topics, you need to know what and why, not how to implement.

Distinguish the guidance from the outline. The Exam Guidance doesn’t always separate “the exam outline says this” from “here’s how to think about this in an AI context.” When it claims the outline integrates AI into shared responsibility models for cloud-based AI services, it’s most likely reading an AI lens onto an existing objective that already covers shared responsibility generally. The exam outline is the authoritative source for what’s explicitly tested. Read the Exam Guidance as an interpretive layer that shows you how existing CISSP concepts apply to AI scenarios, not as a guarantee that every domain now has standalone AI questions. To know what’s on the exam, check the outline. To understand how to think about it, read the guidance.

Learn the vocabulary. Several AI concepts show up across multiple domains. If you understand these terms, you can reason through scenarios even if the specific question is unfamiliar:

Data poisoning: Corrupting training data to manipulate model behavior

Model drift: Degradation of model performance over time (natural or adversarial)

Prompt injection: Untrusted input that changes an AI system’s intended behavior

Adversarial attacks: Inputs specifically crafted to cause model misclassification

Non-Human Identity (NHI): Credentials used by AI agents and automated systems

Explainable AI (XAI): The ability to understand and audit AI decision-making

Shadow AI: Unauthorized use of public AI tools by employees

Map AI to frameworks you already know. The ISC2 Exam Guidance references several frameworks that connect AI security to traditional CISSP material:

NIST AI RMF (AI 100-1): The voluntary US framework for AI risk management. Four functions: Govern, Map, Measure, and Manage. This maps directly to Domain 1’s risk management concepts. If you understand NIST RMF, the structure is familiar.

ISO/IEC 42001: The certifiable AI management system standard. Think of it as ISO 27001 for AI. If you understand the ISO 27001 PDCA cycle, you understand the structure of 42001.

OWASP Top 10 for LLMs: The authoritative vulnerability taxonomy for LLM applications. Prompt injection is #1. If you know the traditional OWASP Top 10, this is the AI equivalent.

Use the dual pattern as a study filter. When you encounter an AI topic, ask yourself: Is this about securing an AI system or about using AI for defense? That distinction will help you orient quickly to exam questions.

Read the Exam Guidance itself. It’s 25 pages, free, and directly from ISC2. The CISSP section is pages 8 through 10. It won’t tell you exactly what the exam will ask, but it tells you what ISC2 considers testable. That’s as close to a study guide as you’ll get from the source.

The Bigger Picture

ISC2 folded AI into every existing credential because that’s how AI works in practice. It isn’t a separate discipline. It changes how you manage risk, classify assets, design architecture, manage identities, test systems, run a SOC, and secure software.

The CISSP has always been about breadth: knowing enough about every domain to make good security decisions. AI extends that expectation.

If you’re a current CISSP holder, this is CPE territory. Pick a framework (NIST AI RMF is a good starting point), learn the vocabulary, and start mapping AI risks to the domains you already understand. While the assets and threats may be different, the governance structure you’ve learned still applies.

ISC2 has built out a dedicated learning track for CISSP holders who want to go deeper. The ISC2 AI Security Certificate is a standalone credential covering AI attack recognition and mitigation, AI security framework comparisons, and strategies for balancing AI tools with human decision-making (essentially the layer above what the base CISSP AI integration requires). For something more targeted, the AI Security Express Courses cover specific topics like Generative AI, Secure Development, and AI Integration and Monitoring in a shorter format. If you have five or more years of experience and want to work through the strategic picture with peers, ISC2 also runs in-person and virtual Securing AI Workshops designed for mid- and senior-level practitioners. The data support acting now: according to ISC2’s 2025 Cybersecurity Workforce Study, 70% of CISSPs are already pursuing additional AI qualifications. The professionals who close this gap now will be the ones asked to lead the governance conversations in their organizations.

If you’re a candidate, the governance frameworks you’re studying are the foundation for AI security. The risk management processes, classification schemes, access control principles, and assessment methodologies all apply. What’s new is the threat surface inside each one: the poisoning vectors, the non-deterministic outputs, the identity challenges that come with autonomous agents. The Exam Guidance gives you a map of what to learn.

The structure you’ve studied is the starting point.

CISSP relevance: All 8 domains. Domain 1 (AI governance, supply chain risk), Domain 2 (AI asset classification), Domain 3 (prompt injection, XAI), Domain 4 (AI network segmentation), Domain 5 (NHI governance), Domain 6 (AI red teaming), Domain 7 (model drift monitoring), Domain 8 (AI-generated code risks).