Inside the NIST AI Risk Management Framework

The NIST AI Risk Management Framework (AI RMF) is the US government's recommendation for organizations seeking a structured approach to AI risk. NIST developed it under a mandate in the National AI Initiative Act of 2020, through a public consultation process that began in 2021, and published version 1.0 in January 2023.

The framework is voluntary and non-certifiable. There is no certification scheme for it, so any claim of alignment is self-declared. If you need something an auditor can certify, ISO/IEC 42001 is the certifiable counterpart. What the RMF gives you is a shared vocabulary.

NIST also publishes a companion document called the AI RMF Playbook. The framework itself is about 40 pages of principles. The Playbook runs over 140 pages of suggested actions, transparency questions, and reference resources for each piece of the framework. If you only read the framework, you get the abstractions, while most of the operational guidance is in the Playbook.

This article walks through the four functions at the heart of the framework, using Playbook content to sharpen what each function actually requires.

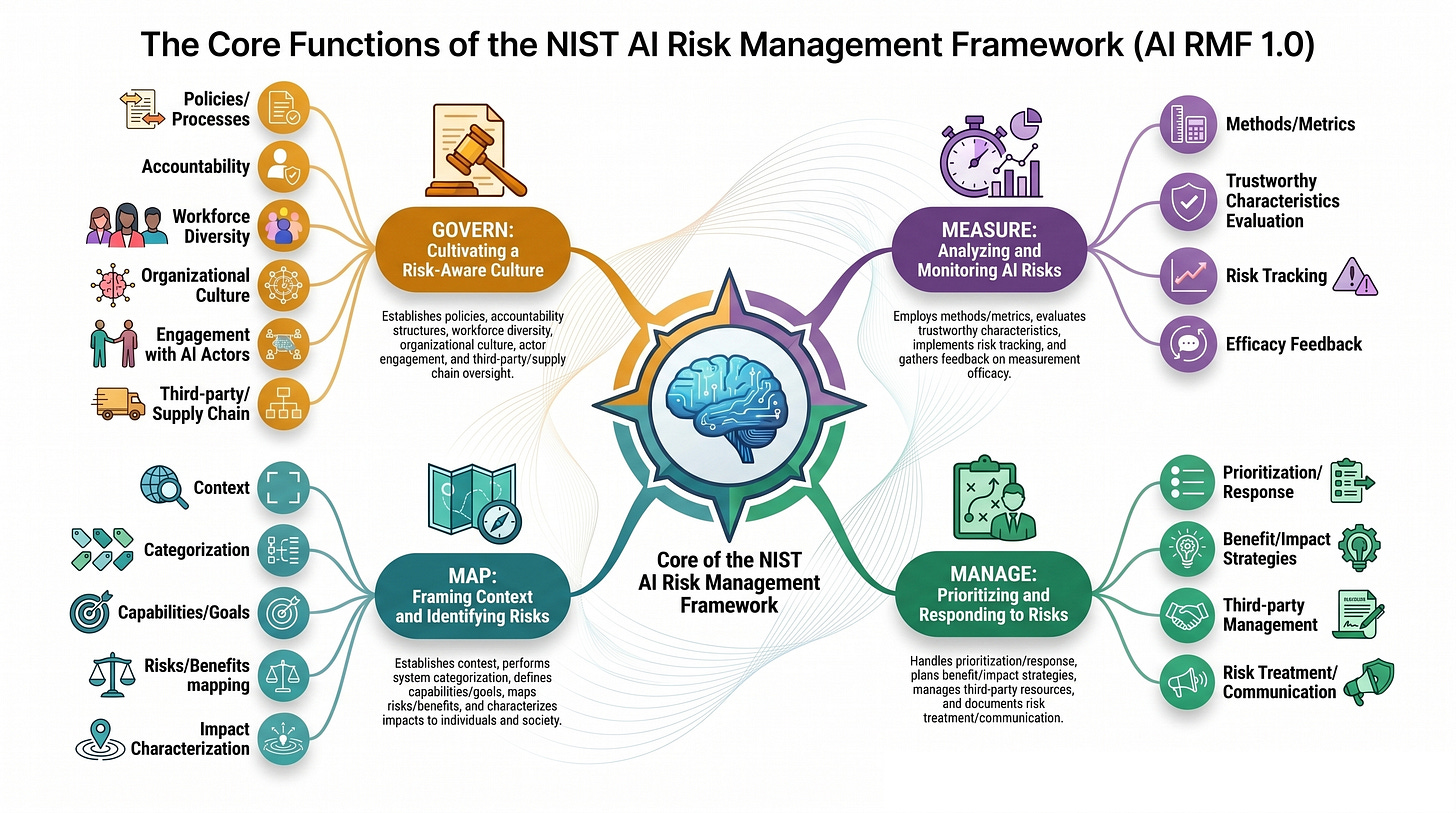

The four functions at a glance

NIST AI RMF organizes everything around four functions: GOVERN, MAP, MEASURE, and MANAGE. They aren’t sequential steps. They’re roles in a system that runs continuously.

GOVERN sits across the whole framework. It’s the organizational substrate: policies, accountability, culture, and oversight that make the other three functions possible. MAP, MEASURE, and MANAGE then run in a loop. MAP establishes the context for understanding a specific AI system. MEASURE tests it against the trustworthiness characteristics NIST defines. MANAGE turns those measurements into prioritization decisions, kill-switch procedures, and disclosures to affected parties. The outputs of all three feed back into GOVERN, which uses them to update policies, roles, and culture over time. The framework is iterative, not linear.
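To make the loop concrete, here is a minimal sketch in Python of one pass through MAP, MEASURE, and MANAGE, with the results fed back into GOVERN. It is an illustration only, not anything NIST publishes: the `Governance` class, the function names, the `risk_tolerance` threshold, and the hard-coded scores are all assumptions invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Governance:
    """GOVERN: organizational state the other three functions read and update."""
    risk_tolerance: float = 0.2  # hypothetical threshold, not a NIST value
    lessons: list[str] = field(default_factory=list)


def map_context(system: str) -> dict:
    """MAP: record the context for one AI system (placeholder fields)."""
    return {"system": system, "intended_use": "unspecified"}


def measure(context: dict) -> dict:
    """MEASURE: return illustrative risk scores per trustworthiness characteristic."""
    # Hard-coded numbers stand in for real evaluations of the mapped system.
    return {"valid_and_reliable": 0.1, "fair": 0.3}


def manage(scores: dict, gov: Governance) -> list[str]:
    """MANAGE: flag characteristics whose risk exceeds the tolerance set under GOVERN."""
    return sorted(name for name, risk in scores.items() if risk > gov.risk_tolerance)


def run_cycle(system: str, gov: Governance) -> list[str]:
    """One pass of MAP -> MEASURE -> MANAGE, with findings fed back into GOVERN."""
    context = map_context(system)
    scores = measure(context)
    flagged = manage(scores, gov)
    gov.lessons.extend(f"{system}: review {name}" for name in flagged)
    return flagged
```

Calling `run_cycle("chatbot", Governance())` flags only the characteristic whose score exceeds the tolerance and records it on the governance object. This mirrors the idea that GOVERN both sets the threshold and absorbs what the loop learns.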

GOVERN

GOVERN is where the framework starts and where most organizations underinvest. It’s the function that establishes who’s responsible for what, what risks the organization is willing to take, how AI work fits into existing accountability structures, and how culture supports raising concerns rather than burying them.